TextGraphs: raw texts, LLMs, and KGs, oh my!

Welcome to the TextGraphs library...

- demo: https://huggingface.co/spaces/DerwenAI/textgraphs

- code: https://github.com/DerwenAI/textgraphs

- biblio: https://derwen.ai/docs/txg/biblio

- DOI: 10.5281/zenodo.10431783

Overview

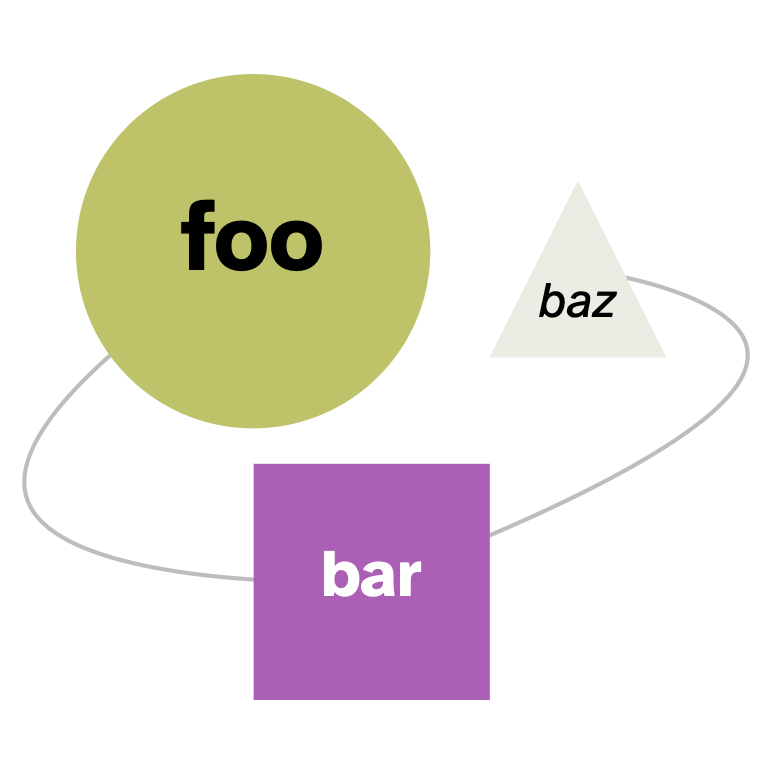

Explore uses of large language models (LLMs) in semi-automated knowledge graph (KG) construction from unstructured text sources, with human-in-the-loop (HITL) affordances to incorporate guidance from domain experts.

What is "generative AI" in the context of working with knowledge graphs? Initial attempts tend to fit a simple pattern based on prompt engineering: present text sources to an LLM-based chat interface and ask it to generate an entire graph. This is generally expensive, and the results are often poor. Moreover, the lack of controls or curation in this approach is a serious disconnect from how KGs get curated to represent an organization's domain expertise.

Can the definition of "generative" be reformulated for KGs? Instead of trying to use a fully automated "black box", what if it were possible to generate composable elements which then get aggregated into a KG? Some research in topological analysis of graphs indicates potential ways to decompose graphs, which can then be re-composed probabilistically. While the mathematics may be sound, these techniques need to be understood in the context of the full range of tasks within KG construction workflows, to assess how they apply to real-world graph data.

This project explores the use of LLM-augmented components within natural language workflows, focusing on small, well-defined tasks within the scope of KG construction. To address challenges in this problem, the project considers improved means of tokenization for handling input. In addition, a range of methods is considered for filtering and selecting elements of the output stream, then re-composing them into KGs. As a side effect, this provides steps toward better pattern identification and variable abstraction layers for graph data, supporting graph levels of detail (GLOD).
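The filtering and re-composition steps described above can be illustrated with a minimal Python sketch. It assumes, hypothetically, that an LLM-augmented extraction component emits a stream of candidate (subject, relation, object) triples, each with a confidence score in [0, 1]. The `Candidate` record, the `recompose()` function, and its `threshold` parameter are illustrative names, not part of the TextGraphs API, and lowercasing entity labels is only a crude stand-in for real entity linking.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple

Triple = Tuple[str, str, str]


@dataclass(frozen=True)
class Candidate:
    """Hypothetical element emitted by an LLM-augmented extraction step."""
    subj: str
    rel: str
    obj: str
    score: float  # assumed confidence in [0, 1]


def recompose(candidates: Iterable[Candidate], threshold: float = 0.5) -> Dict[Triple, float]:
    """Filter a stream of candidate triples, then aggregate them into a weighted KG.

    Candidates scoring below `threshold` are dropped. Duplicate triples
    have their scores summed, so facts supported by several extractions
    accumulate more weight in the resulting graph.
    """
    graph: Dict[Triple, float] = defaultdict(float)
    for c in candidates:
        if c.score < threshold:
            continue  # filtering: discard low-confidence output
        # naive canonicalization, standing in for entity linking
        key = (c.subj.strip().lower(), c.rel, c.obj.strip().lower())
        graph[key] += c.score  # aggregation: merge duplicate elements
    return dict(graph)


if __name__ == "__main__":
    stream = [
        Candidate("Werner Herzog", "directed", "Fitzcarraldo", 0.75),
        Candidate("werner herzog ", "directed", "Fitzcarraldo", 0.5),
        Candidate("Werner Herzog", "born_in", "Munich", 0.25),
    ]
    kg = recompose(stream, threshold=0.5)
    print(kg)  # {('werner herzog', 'directed', 'fitzcarraldo'): 1.25}
```

Summing scores is just one aggregation choice: it rewards triples that multiple extractions agree on, whereas keeping only the maximum score would treat repeated extractions as no stronger than a single one.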

While many papers aim to evaluate benchmarks, this line of inquiry focuses instead on integration: means of combining multiple complementary research projects; how to evaluate the outcomes of other projects to assess their potential usefulness in production-quality libraries; and suggested directions for improving the LLM-based components of NLP workflows used to construct KGs.

Index Terms

natural language processing, knowledge graph construction, large language models, entity extraction, entity linking, relation extraction, semantic random walk, human-in-the-loop, topological decomposition of graphs, graph levels of detail, network motifs