Leveraging Domain Expertise

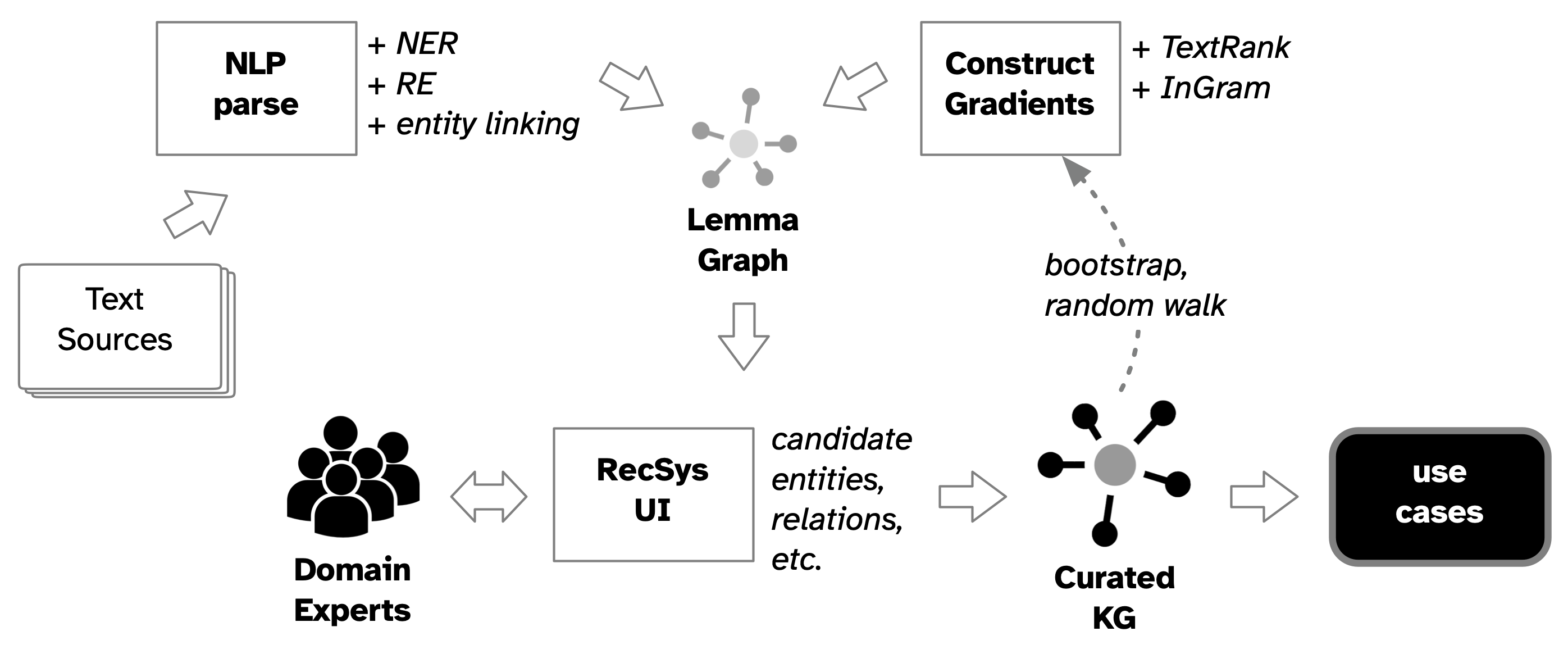

Rather than fully automatic knowledge graph (KG) construction, this approach emphasizes ways to incorporate domain experts through "human-in-the-loop" (HITL) techniques.

Multiple techniques can be used to construct gradients, i.e., graded confidence rankings, for both the generated nodes and the generated edges, starting with the quantitative scores produced by model inference.

- gradient for recommending extracted entities: named entity recognition, TextRank, probabilistic soft logic, etc.

- gradient for recommending extracted relations: relation extraction, graph of relations, etc.
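As one illustration of an entity gradient, here is a minimal TextRank-style sketch: PageRank computed by power iteration over an undirected graph linking lemmas that co-occur within a small sliding window. It assumes the lemmas are already extracted and normalized; the function name `textrank`, the window size, and the damping factor of 0.85 are illustrative choices, not the API of any particular library.

```python
from collections import defaultdict


def textrank(lemmas, window=2, damping=0.85, iterations=50):
    """Score lemmas with PageRank over an undirected co-occurrence graph (TextRank-style)."""
    # link each lemma to the distinct lemmas inside a sliding window of `window` lemmas
    neighbors = defaultdict(set)
    for i, lemma in enumerate(lemmas):
        for other in lemmas[i + 1 : i + window]:
            if other != lemma:
                neighbors[lemma].add(other)
                neighbors[other].add(lemma)

    nodes = list(neighbors)
    if not nodes:
        return {}
    n = len(nodes)
    rank = {node: 1.0 / n for node in nodes}
    # power iteration; every node has at least one neighbor, so total rank stays at 1
    for _ in range(iterations):
        rank = {
            node: (1.0 - damping) / n
            + damping * sum(rank[nb] / len(neighbors[nb]) for nb in neighbors[node])
            for node in nodes
        }
    return rank


# toy example: "graph" is the hub of this co-occurrence graph, so it ranks highest
scores = textrank(["graph", "model", "graph", "expert", "graph", "node"])
top = max(scores, key=scores.get)
```

Lemmas that never co-occur with a different lemma get no score in this sketch; in practice such scores would be one input among several (NER confidence, relation extraction scores) combined into the gradient.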

Results extracted from lemma graphs provide gradients which can be leveraged to elicit feedback from domain experts:

- high-pass filter: accept results above an upper threshold as valid automated inferences

- low-pass filter: reject results below a lower threshold as errors and noise
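The two filters amount to a three-way split on each result's gradient value. A minimal sketch, assuming scores are normalized to [0, 1]; the `Candidate` type and the default thresholds of 0.2 and 0.8 are hypothetical and would need tuning per domain.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    label: str    # an extracted entity or relation
    score: float  # its gradient value, assumed normalized to [0, 1]


def triage(candidates, low=0.2, high=0.8):
    """Split candidates into accepted, rejected, and needs-review buckets."""
    if not low < high:
        raise ValueError("low threshold must be below high threshold")
    accepted, rejected, review = [], [], []
    for cand in candidates:
        if cand.score >= high:    # high-pass: trust the automated inference
            accepted.append(cand)
        elif cand.score <= low:   # low-pass: discard as error or noise
            rejected.append(cand)
        else:                     # in between: route to a domain expert
            review.append(cand)
    return accepted, rejected, review
```

Widening the gap between `low` and `high` sends more results to expert review; narrowing it trusts the model more.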

For the results that fall in between, a recommender system (recsys) or similar UI can elicit review from domain experts, using techniques such as active learning and weak supervision; see https://argilla.io/
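One common active-learning strategy for ordering that review is uncertainty sampling: show experts the results the model is least sure about first. A minimal sketch, assuming `(label, score)` pairs with calibrated scores in [0, 1] so that 0.5 is the point of greatest uncertainty; `review_queue` and `apply_feedback` are hypothetical helpers and do not use Argilla's API.

```python
def review_queue(in_between, budget=None):
    """Order (label, score) pairs so the least certain are reviewed first.

    Uncertainty sampling: a score near 0.5 means the model is least sure
    whether to accept or reject, so expert feedback there is most informative.
    """
    ranked = sorted(in_between, key=lambda item: abs(item[1] - 0.5))
    return ranked if budget is None else ranked[:budget]


def apply_feedback(queue, decisions):
    """Split reviewed items by expert decision (True = accept, False = reject)."""
    accepted = [item for item in queue if decisions.get(item[0]) is True]
    rejected = [item for item in queue if decisions.get(item[0]) is False]
    pending = [item for item in queue if item[0] not in decisions]
    return accepted, rejected, pending
```

The `budget` parameter caps how many items a reviewer sees per session; items left `pending` can be re-queued after the model is updated.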

After HITL validation, the higher-value results collected within a lemma graph can be extracted as the primary output of this approach.

When a text document is processed iteratively in chunks, the results from one iteration can be used to bootstrap the lemma graph for the next iteration. This provides a natural means of accumulating (i.e., aggregating) results across the overall analysis.
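A minimal sketch of this chunk-by-chunk bootstrapping, written as a fold: each iteration starts from the graph produced so far and returns an updated one. It assumes a toy `LemmaGraph` of counts (not the data structure of any particular library), a hypothetical `naive_lemmas` that stands in for a real lemmatizer, and co-occurrence within a chunk as a placeholder for extracted relations.

```python
from collections import Counter
from dataclasses import dataclass, field
from functools import reduce
from itertools import combinations


@dataclass
class LemmaGraph:
    nodes: Counter = field(default_factory=Counter)  # lemma -> mention count
    edges: Counter = field(default_factory=Counter)  # (lemma, lemma) -> co-occurrence count


def naive_lemmas(chunk):
    """Hypothetical stand-in for a real lemmatizer: lowercase, strip punctuation."""
    return [w for w in (tok.strip(".,;:!?").lower() for tok in chunk.split()) if w]


def process_chunk(graph, chunk):
    """One iteration: start from the graph so far and fold in this chunk's results."""
    lemmas = naive_lemmas(chunk)
    nodes = graph.nodes + Counter(lemmas)
    # sorted pairs give each undirected edge a single canonical key
    edges = graph.edges + Counter(combinations(sorted(set(lemmas)), 2))
    return LemmaGraph(nodes, edges)


chunks = ["Experts review the graph.", "The graph grows."]
graph = reduce(process_chunk, chunks, LemmaGraph())
```

Because each step returns a new graph, the result of any iteration can be inspected or checkpointed before the next chunk is processed.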

By extension, this bootstrap/accumulation process can be applied to the distributed processing of a corpus of documents, where the "data exhaust" of abstracted lemma graphs used to bootstrap analysis workflows effectively becomes a knowledge graph, as a side effect of the analysis.
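Distributing this works when merging partial results is associative, so per-document graphs can be combined in any order, as in a map-reduce job. A minimal sketch, assuming edge counts as the "data exhaust" and a local thread pool as a stand-in for a real distributed scheduler; `analyze_document`, `merge`, and `build_kg` are hypothetical names.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from functools import reduce
from itertools import combinations


def analyze_document(text):
    """Hypothetical per-document analysis: its 'data exhaust' is a Counter of
    edges between lowercase tokens that co-occur in the same sentence."""
    exhaust = Counter()
    for sentence in text.split("."):
        lemmas = sorted({tok.lower() for tok in sentence.split()})
        exhaust.update(combinations(lemmas, 2))
    return exhaust


def merge(left, right):
    """Counter addition is associative and commutative, so partial graphs
    can be combined in any order (the reduce step)."""
    return left + right


def build_kg(corpus, workers=4):
    # map step: analyze documents concurrently (a thread pool stands in for a cluster)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(analyze_document, corpus))
    # reduce step: the accumulated exhaust is the knowledge graph's edge set
    return reduce(merge, partials, Counter())
```

The same `merge` could also fold a previously built graph into new results, which is how the accumulated graph can bootstrap later analysis runs.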