Note

To run this notebook in JupyterLab, load examples/ex6_0.ipynb

Graph algorithms with networkx¶

Once we have linked data represented as a KG, we can begin to use graph algorithms and network analysis on the data. Perhaps the most famous of these is PageRank which helped launch Google, also known as a stochastic variant of eigenvector centrality.

We'll use the networkx library to run graph algorithms, since rdflib lacks support for this.

Note that in networkx an edge connects two nodes, where both nodes and edges may have properties.

This is effectively a property graph.

In contrast, an RDF graph in rdflib allows for multiple relations (predicates) between RDF subjects and objects, although there are no values represented.

Also, networkx requires its own graph representation in memory.

Based on a branch of mathematics related to linear algebra called algebraic graph theory, it's possible to convert between a simplified graph (such as networkx requires) and its matrix representation.

Many of the popular graph algorithms can be optimized in terms of matrix operations – often leading to orders of magnitude in performance increases.

In contrast, the more general form of mathematics for representing complex graphs and networks involves using tensors instead of matrices.

For example, you may have heard that word tensor used in association with neural networks?

See also:

- https://towardsdatascience.com/10-graph-algorithms-visually-explained-e57faa1336f3

- https://web.stanford.edu/class/cs97si/06-basic-graph-algorithms.pdf

- https://networkx.org/documentation/stable/reference/algorithms/index.html

First, let's load our recipe KG:

from os.path import dirname

import kglab

import os

namespaces = {

"nom": "http://example.org/#",

"wtm": "http://purl.org/heals/food/",

"ind": "http://purl.org/heals/ingredient/",

"skos": "http://www.w3.org/2004/02/skos/core#",

}

kg = kglab.KnowledgeGraph(

name = "A recipe KG example based on Food.com",

base_uri = "https://www.food.com/recipe/",

namespaces = namespaces,

)

kg.load_rdf(dirname(os.getcwd()) + "/dat/recipes.ttl") ;

The kglab.SubgraphMatrix class transforms graph data from its symbolic representation in an RDF graph into a numerical representation which is an adjacency matrix.

Most graph algorithm libraries such as NetworkX use an adjacency matrix representation internally.

Later we'll use the inverse transform in the subgraph to convert graph algorithm results back into their symbolic representation.

First, we'll define a SPARQL query to use for building a subgraph of recipe URLs and their related ingredients.

Note the bindings subject and object – for subject and object respectively.

The SubgraphMatrix class expects these in the results of a SPARQL query used to generate a representation for NetworkX.

sparql = """

SELECT ?subject ?object

WHERE {

?subject rdf:type wtm:Recipe .

?subject wtm:hasIngredient ?object .

}

"""

Now we'll use the result set from this query to build a NetworkX subgraph.

Formally speaking, all RDF graphs are directed graphs since the semantics of RDF connect a subject through a predicate to an object.

We use a DiGraph for a directed graph.

Note: the bipartite parameter identifies the subject and object nodes to be within one of two bipartite sets – which we'll describe in more detail below.

import networkx as nx

subgraph = kglab.SubgraphMatrix(kg, sparql)

nx_graph = subgraph.build_nx_graph(nx.DiGraph(), bipartite=True)

Graph measures¶

One simple measure in networkx is to use the density() method to calculate graph density.

This is a ratio of the edges in the graph to the maximum possible number of edges it could have.

A dense graph will tend toward a density measure of the 1.0 upper bound, while a sparse graph will tend toward the 0.0 lower bound.

nx.density(nx_graph)

0.016513480392156863

While interpretations of the density metric depends largely on context, here we could say that the recipe-ingredient relations in our recipe KG are relatively sparse.

To perform some kinds of graph analysis and traversals, you may need to convert the directed graph to an undirected graph.

For example, the following code uses bfs_edges() to perform a bread-first search (BFS) beginning at a starting node source and search out to a maximum of depth_limit hops as a boundary.

We'll use butter as the starting node, which is a common ingredient and therefore should have many neighbors. In other words, collect the most immediate "neighbors" for butter from the graph:

butter_node = kg.get_ns("ind").Butter

butter_id = subgraph.transform(butter_node)

edges = list(nx.bfs_edges(nx_graph, source=butter_id, depth_limit=2))

print("num edges:", len(edges))

num edges: 0

Zero. No edges were returned, and thus no neighbors were identified by BFS.

So far within the recipe representation in our KG, the butter ingredient is a terminal node, i.e., other nodes connect to it as an object.

However, it never occurs as the subject of an RDF statement, so butter in turn does not connect into any other nodes in a directed graph – it becomes a dead-end.

Now let's use the to_undirected() method to convert to an undirected graph first, then run the same BFS again:

for edge in nx.bfs_edges(nx_graph.to_undirected(), source=butter_id, depth_limit=2):

s_id, o_id = edge

s_node = subgraph.inverse_transform(s_id)

s_label = subgraph.n3fy(s_node)

o_node = subgraph.inverse_transform(o_id)

o_label = subgraph.n3fy(o_node)

# for brevity's sake, only show non-butter nodes

if s_node != butter_node:

print(s_label, o_label)

<https://www.food.com/recipe/100230> ind:AllPurposeFlour

<https://www.food.com/recipe/100230> ind:CowMilk

<https://www.food.com/recipe/100230> ind:Salt

<https://www.food.com/recipe/100230> ind:VanillaExtract

<https://www.food.com/recipe/100230> ind:WhiteSugar

<https://www.food.com/recipe/101876> ind:Water

<https://www.food.com/recipe/101876> ind:WholeWheatFlour

<https://www.food.com/recipe/103073> ind:ChickenEgg

<https://www.food.com/recipe/103964> ind:BlackPepper

<https://www.food.com/recipe/104441> ind:Garlic

<https://www.food.com/recipe/104441> ind:OliveOil

<https://www.food.com/recipe/138985> ind:BrownSugar

<https://www.food.com/recipe/141995> ind:AppleCiderVinegar

<https://www.food.com/recipe/268209> ind:Honey

Among the closest neighbors for butter we find salt, milk, flour, sugar, honey, vanilla, etc.

BFS is a relatively quick and useful approach for building discovery tools and recommender systems to explore neighborhoods of a graph.

Given how we've built this subgraph, it has two distinct and independent sets of nodes – namely, the recipes and the ingredients. In other words, recipes only link to ingredients, and ingredients only link to recipes. This structure fits the formal definitions of a bipartite graph, which is important for working AI applications such as recommender systems, search engines, etc. Let's decompose our subgraph into its two sets of nodes:

from networkx.algorithms import bipartite

rec_nodes, ind_nodes = bipartite.sets(nx_graph)

print("recipes\n", rec_nodes, "\n")

print("ingredients\n", ind_nodes)

recipes

{0, 7, 10, 12, 14, 17, 18, 19, 20, 21, 22, 23, 24, 25, 26, 27, 28, 29, 30, 31, 32, 33, 34, 35, 38, 39, 40, 41, 42, 43, 44, 45, 46, 47, 48, 49, 50, 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 64, 65, 66, 67, 68, 69, 70, 71, 72, 73, 74, 75, 76, 77, 78, 79, 80, 81, 82, 83, 84, 85, 86, 87, 88, 89, 90, 91, 92, 93, 94, 95, 96, 97, 98, 99, 100, 101, 102, 103, 104, 105, 106, 107, 108, 109, 110, 111, 112, 113, 114, 115, 116, 117, 118, 119, 120, 121, 122, 123, 124, 126, 127, 128, 129, 130, 131, 132, 133, 134, 135, 136, 137, 138, 139, 140, 141, 142, 143, 144, 145, 146, 147, 148, 149, 150, 151, 152, 153, 154, 155, 156, 157, 158, 159, 160, 161, 162, 163, 164, 165, 166, 167, 168, 169, 170, 171, 172, 173, 174, 175, 176, 177, 178, 179, 180, 181, 182, 183, 184, 185, 186, 187, 188, 189, 190, 191, 192, 193, 194, 195, 196, 197, 198, 199, 200, 201, 202, 203, 204, 205, 206, 207, 208, 209, 210, 211, 212, 213, 214, 215, 216, 217, 218, 219, 220, 221, 222, 223, 224, 225, 226, 227, 228, 229, 230, 231, 232, 233, 234, 235, 236, 237, 238, 239, 240, 241, 242, 243, 244, 245, 246, 247, 248, 249, 250, 251, 252, 253, 254, 255}

ingredients

{1, 2, 3, 4, 5, 6, 37, 8, 9, 36, 11, 13, 15, 16, 51, 125}

If you remove the if statement from the BFS example above that filters output, you may notice some "shapes" or topology evident in the full listing of neighbors. In other words, BFS search expands out as butter connects to a set of recipes, then those recipes connect to other ingredients, and in turn those ingredients connect to an even broader set of other recipes.

We can measure some of the simpler, more common topologies in the graph by using the triadic_census() method, which identifies and counts the occurrences of dyads and triads:

for triad, occurrences in nx.triadic_census(nx_graph).items():

if occurrences > 0:

print("triad {:>4}: {:7d} occurrences".format(triad, occurrences))

triad 003: 2564784 occurrences

triad 012: 123660 occurrences

triad 021D: 2025 occurrences

triad 021U: 73051 occurrences

See "Figure 1" in [batageljm01] for discussion about how to decode this output from triadic_census() based on the 16 possible forms of triads.

In this case, we see many occurrences of 021D and 021U triads, which is expected in a bipartite graph.

Centrality and connectedness¶

Let's make good use of those bipartite sets for filtering results from other algorithms.

Some of the ingredients are used more frequently than others. In very simple graphs we could use statistical frequency counts to measure that, although a more general purpose approach is to measure the degree centrality, i.e., "How connected is each node?" This is similar to calculating PageRank:

results = nx.degree_centrality(nx_graph)

ind_rank = {}

for node_id, rank in sorted(results.items(), key=lambda item: item[1], reverse=True):

if node_id in ind_nodes:

ind_rank[node_id] = rank

node = subgraph.inverse_transform(node_id)

label = subgraph.n3fy(node)

print("{:6.3f} {}".format(rank, label))

0.745 ind:AllPurposeFlour

0.667 ind:ChickenEgg

0.620 ind:Salt

0.576 ind:Butter

0.518 ind:CowMilk

0.388 ind:WhiteSugar

0.275 ind:Water

0.212 ind:VanillaExtract

0.059 ind:BlackPepper

0.043 ind:BrownSugar

0.035 ind:WholeWheatFlour

0.035 ind:OliveOil

0.024 ind:AppleCiderVinegar

0.012 ind:Bacon

0.012 ind:Honey

0.008 ind:Garlic

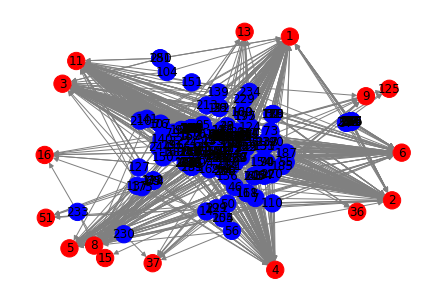

We can plot the graph directly from networkx using matplotlib:

!pip install matplotlib

Requirement already satisfied: matplotlib in /Users/paco/src/kglab/venv/lib/python3.7/site-packages (3.4.3)

Requirement already satisfied: numpy>=1.16 in /Users/paco/src/kglab/venv/lib/python3.7/site-packages (from matplotlib) (1.21.2)

Requirement already satisfied: pyparsing>=2.2.1 in /Users/paco/src/kglab/venv/lib/python3.7/site-packages (from matplotlib) (2.4.7)

Requirement already satisfied: kiwisolver>=1.0.1 in /Users/paco/src/kglab/venv/lib/python3.7/site-packages (from matplotlib) (1.3.2)

Requirement already satisfied: pillow>=6.2.0 in /Users/paco/src/kglab/venv/lib/python3.7/site-packages (from matplotlib) (8.3.2)

Requirement already satisfied: cycler>=0.10 in /Users/paco/src/kglab/venv/lib/python3.7/site-packages (from matplotlib) (0.10.0)

Requirement already satisfied: python-dateutil>=2.7 in /Users/paco/src/kglab/venv/lib/python3.7/site-packages (from matplotlib) (2.8.1)

Requirement already satisfied: six in /Users/paco/src/kglab/venv/lib/python3.7/site-packages (from cycler>=0.10->matplotlib) (1.15.0)

import matplotlib.pyplot as plt

color = [ "red" if n in ind_nodes else "blue" for n in nx_graph.nodes()]

nx.draw(nx_graph, node_color=color, edge_color="gray", with_labels=True)

plt.show()

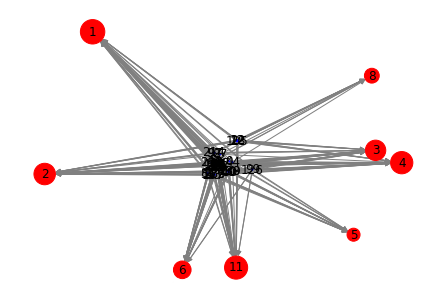

Next, let's determine the k-cores which are "maximal connected subgraphs" such that each node has k connections:

core_g = nx.k_core(nx_graph)

core_g.nodes()

NodeView((0, 1, 2, 3, 4, 5, 6, 129, 8, 11, 12, 18, 22, 25, 28, 30, 165, 38, 167, 40, 44, 53, 54, 60, 188, 68, 198, 78, 81, 83, 85, 86, 87, 88, 214, 215, 217, 92, 97, 99, 103, 105, 235, 239, 247, 126))

Now let's plot those k-core nodes in a simplified visualization, which helps reveal the interconnections:

color = [ "red" if n in ind_nodes else "blue" for n in core_g ]

size = [ ind_rank[n] * 800 if n in ind_nodes else 1 for n in core_g ]

nx.draw(core_g, node_color=color, node_size=size, edge_color="gray", with_labels=True)

plt.show()

for node_id, rank in sorted(ind_rank.items(), key=lambda item: item[1], reverse=True):

if node_id in core_g:

node = subgraph.inverse_transform(node_id)

label = subgraph.n3fy(node)

print("{:3} {:6.3f} {}".format(node_id, rank, label))

1 0.745 ind:AllPurposeFlour

11 0.667 ind:ChickenEgg

4 0.620 ind:Salt

2 0.576 ind:Butter

3 0.518 ind:CowMilk

6 0.388 ind:WhiteSugar

8 0.275 ind:Water

5 0.212 ind:VanillaExtract

In other words, as the popular ingredients for recipes in our graph tend to be: flour, eggs, salt, butter, milk, sugar – although not so much water or vanilla.

We can show a similar ranking with PageRank, although with different weights:

page_rank = nx.pagerank(nx_graph)

for node_id, rank in sorted(page_rank.items(), key=lambda item: item[1], reverse=True):

if node_id in core_g and node_id in ind_nodes:

node = subgraph.inverse_transform(node_id)

label = subgraph.n3fy(node)

print("{:3} {:6.3f} {:6.3f} {}".format(node_id, rank, page_rank[node_id], label))

1 0.082 0.082 ind:AllPurposeFlour

11 0.072 0.072 ind:ChickenEgg

4 0.066 0.066 ind:Salt

2 0.062 0.062 ind:Butter

3 0.055 0.055 ind:CowMilk

6 0.042 0.042 ind:WhiteSugar

8 0.034 0.034 ind:Water

5 0.022 0.022 ind:VanillaExtract

Exercises¶

Exercise 1:

Find the node_id number for the node that represents the "black pepper" ingredient.

Then use the bfs_edges() function with its source set to node_id to perform a breadth first search traversal of the graph to depth 2 to find the closest neighbors and print their labels.

Exercise 2:

Use the dfs_edges() function to perform a depth first search with the same parameters.

Exercise 3:

Find the shortest path that connects between the node for "black pepper" and the node for "honey", then print the labels for each node in the path.